Helps new mothers rebuild core strength and pelvic floor control through simple breathing and contraction exercises. Built 0–1 with AI.

Postpartum recovery is one of the most underserved areas in women's health. The apps that exist don't fit the reality — too complex, too unfocused, too easy to abandon. I saw the gap and decided to build something better.

Recovery after childbirth is hard enough. The apps that exist make it harder.

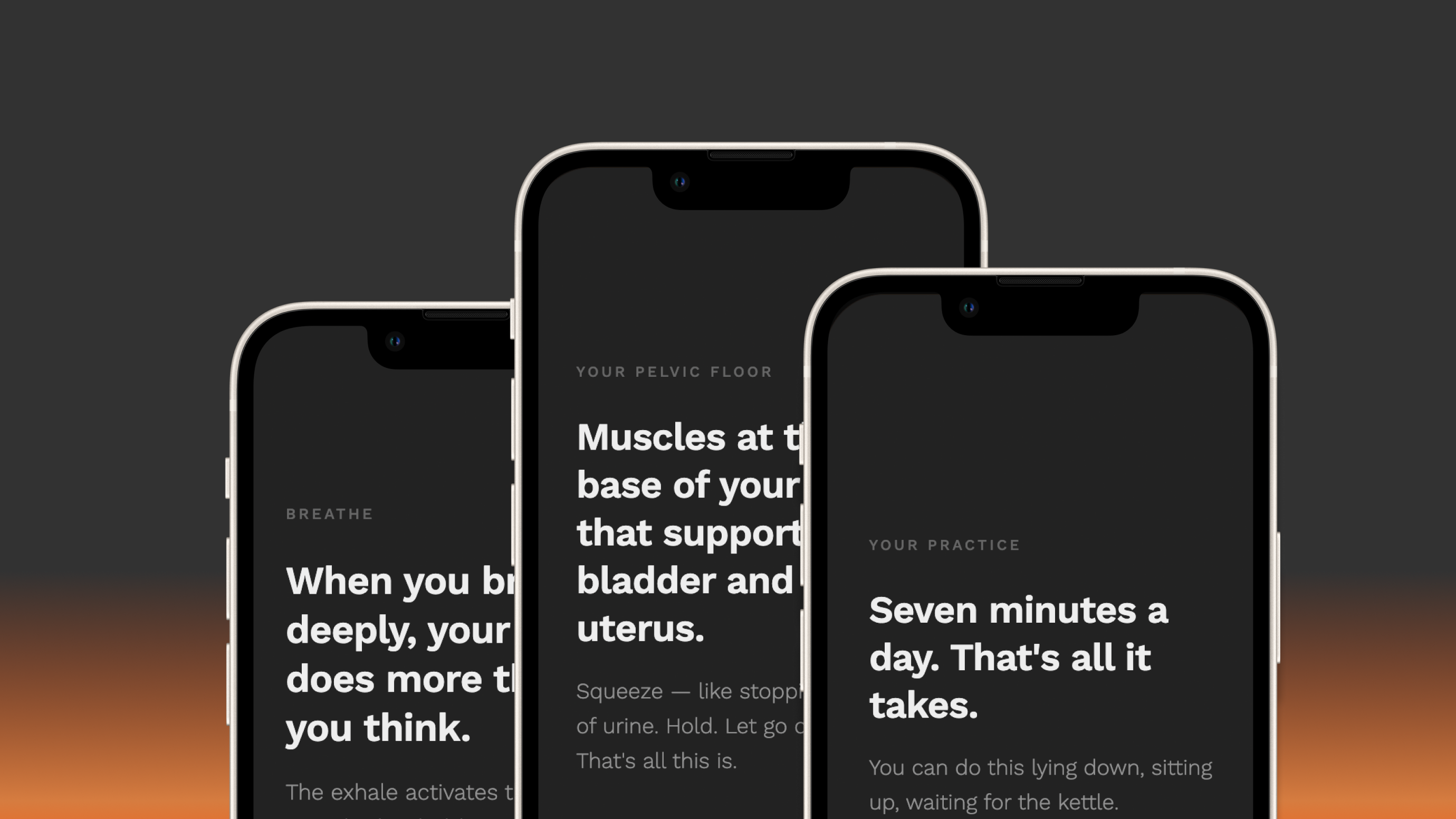

Breathing apps built for meditation, not recovery. Fitness apps that mix everything together, leaving users unsure what they're even training. The result: people stop — not from laziness, but because nothing feels designed for this specific moment. Exhausted, healing, with a newborn. Needing something that just starts.

Before any screen, the structure had to be clear.

The goal was to get real women using it — and collect honest feedback to keep improving. That shaped how I planned the release.

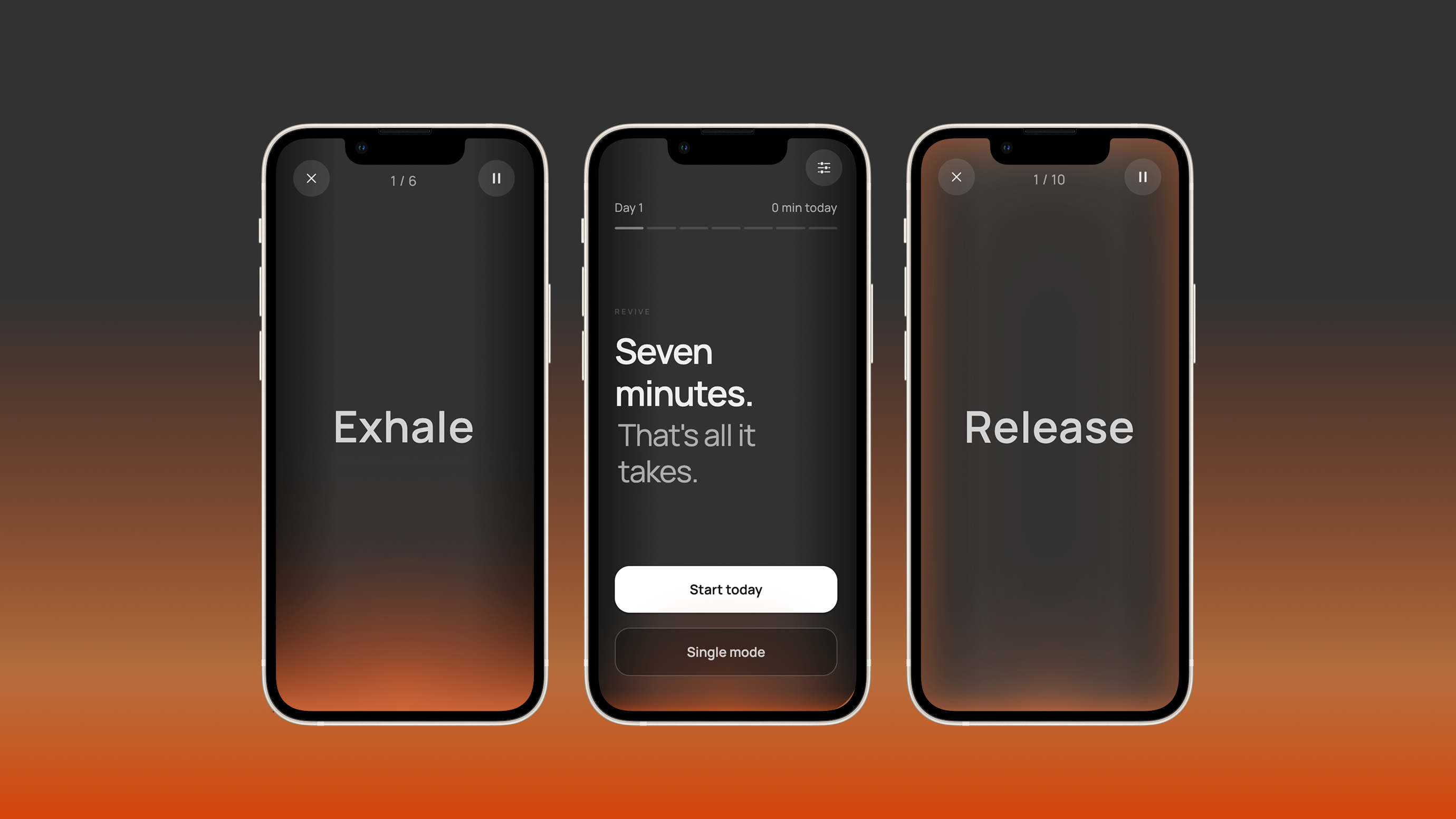

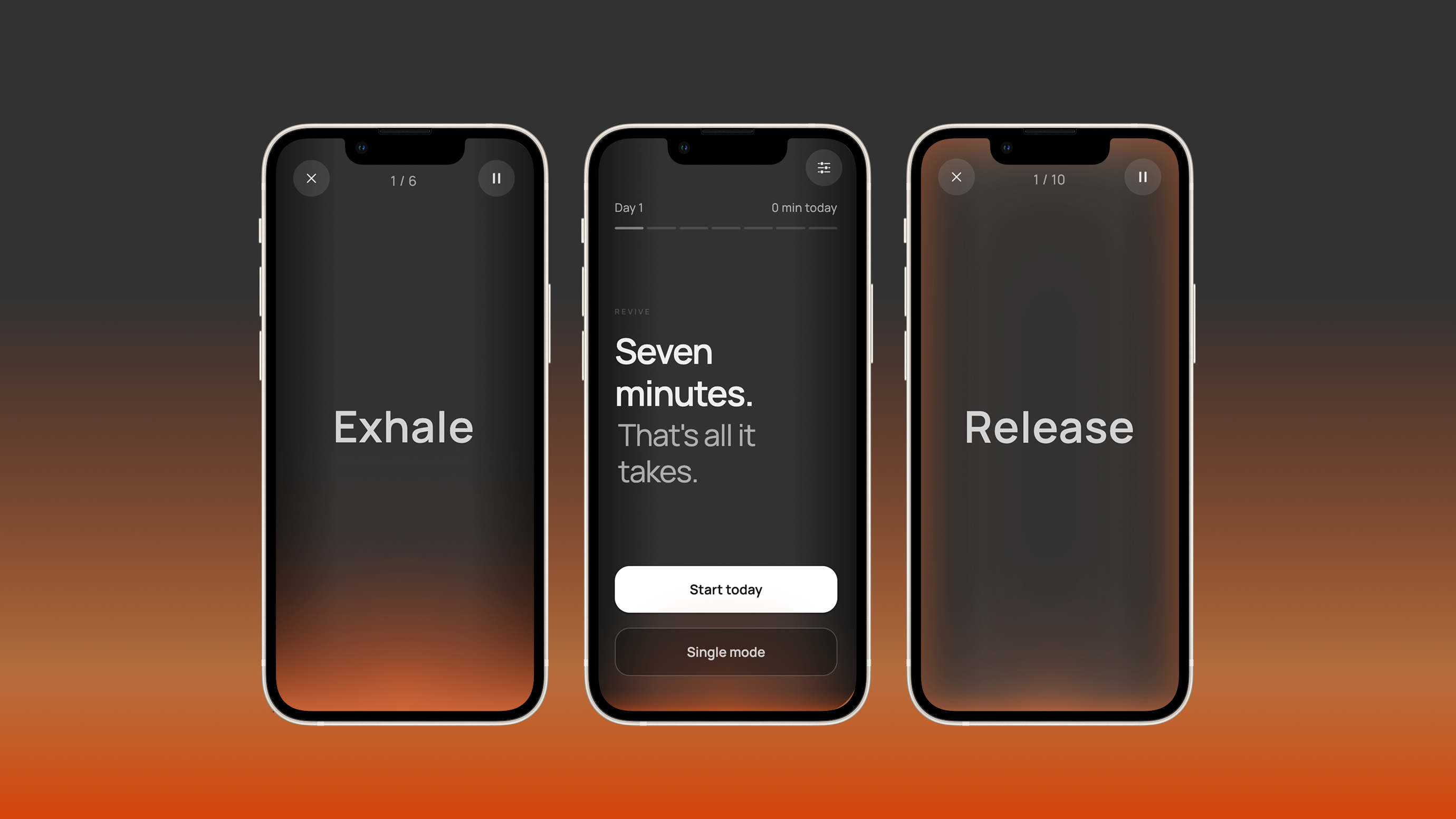

Start with the core: breathing and contraction. Strip everything else out — no login, no accounts, no points system. Just one thing done well: guide a session, remember it happened, make it easy to come back. Web first — fastest to ship, easiest to share, quickest to get feedback.

After gathering detailed feedback from real users, add onboarding and a subscription model, then bring it to the App Store.

Complete a session each day, unlock a new mode. No punishment for missing days. Just a reason to return — because postpartum life doesn't run on a perfect schedule.
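The unlock rule can be sketched as a small pure function. This is a minimal illustration, not the shipped code: the mode names are Revive's four modes, but the unlock order, the choice to make the first mode available from the start, and the `unlockedModes` helper are all assumptions.

```typescript
// Revive's four modes; the unlock order used here is an assumption.
const MODES = ["Breathe", "Floor Slow", "Floor Fast", "Together"] as const;
type Mode = (typeof MODES)[number];

// Hypothetical helper: takes the dates (YYYY-MM-DD) on which a session was
// completed and returns the modes that are currently unlocked.
function unlockedModes(completedDates: string[]): Mode[] {
  // Count distinct days, so two sessions on one day unlock only one mode.
  const distinctDays = new Set(completedDates).size;
  // The first mode is always available (assumption); each completed day adds one.
  const count = Math.min(MODES.length, 1 + distinctDays);
  return MODES.slice(0, count);
}
```

Because the count depends only on how many distinct days have a session, and not on whether those days are consecutive, a missed day never removes progress.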

The visual was the hardest part — and the most important. It's what would make Revive feel different from everything else out there.

My first idea was a 3D character. Something that feels alive — expands on inhale, contracts on exhale. I went deep on it: Midjourney for reference frames, Kling for animation sequences, Gemini for video generation. It took up most of the project time.

It didn't work. The animation needed to loop cleanly, match human breathing rhythm exactly, feel calm and premium, and run reliably on any device. No tool could do all four at once. The results were visually unstable, off-rhythm, or too busy to feel restful.

Generative animation tools are useful for exploration, but not production-ready for rhythm-locked, device-stable motion. Knowing this earlier would have saved a lot of time.

So I changed direction. Light, not form. I sketched four animation concepts in Figma: a glowing sphere and a soft light, each in breathing and contraction versions. Having something I could point to and say "this feeling, not that one" made everything faster.

AI built the working demo quickly. The bigger surprise: it also generated the voice cues and synced the background music to the exercise rhythm — breathing pace, beat, and speech timing all aligned across four modes. What could have taken days of manual coordination took hours.

A rough Figma sketch gave AI more direction than hours of prompting alone. A clear reference changes everything downstream.

Once the visual direction was set, the product scope followed. First decision: what to cut.

Login, accounts, streak rewards, gamification — all out. Version one does one thing: run a 7-minute session, record that it happened, make it easy to come back. In any product, the hardest call isn't what to add. It's what to leave out.
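"Record that it happened" without accounts can be as small as a list of dates kept on the device. The sketch below assumes a storage object with the same `getItem`/`setItem` shape as the browser's `localStorage`; the `revive.sessions` key and the helper names are hypothetical, and the real app may store sessions differently.

```typescript
// Minimal storage shape; the browser's window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

const STORAGE_KEY = "revive.sessions"; // hypothetical key name

// Read the stored list of completed days, tolerating missing or corrupt data.
function loadSessions(store: KeyValueStore): string[] {
  const raw = store.getItem(STORAGE_KEY);
  if (raw === null) return [];
  try {
    const parsed: unknown = JSON.parse(raw);
    return Array.isArray(parsed)
      ? parsed.filter((d): d is string => typeof d === "string")
      : [];
  } catch {
    return [];
  }
}

// Record one completed session; repeated sessions on the same day are stored once.
function recordSession(store: KeyValueStore, finishedAt: Date): string[] {
  // Simplification: uses the UTC calendar date, not the user's local date.
  const day = finishedAt.toISOString().slice(0, 10);
  const days = loadSessions(store);
  if (!days.includes(day)) days.push(day);
  store.setItem(STORAGE_KEY, JSON.stringify(days));
  return days;
}

// In-memory stand-in so the sketch also runs outside a browser.
function createMemoryStore(): KeyValueStore {
  const data = new Map<string, string>();
  return {
    getItem: (key) => data.get(key) ?? null,
    setItem: (key, value) => {
      data.set(key, value);
    },
  };
}
```

In a browser, `recordSession(window.localStorage, new Date())` would be called when the session timer finishes. Keeping the record on the device is what makes the no-login scope workable.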

Even the language needed care. To choose between "Squeeze" and "Gather" as the exercise cue word, I asked AI to check clinical sources and competitor apps. The answer came back fast: every physio resource uses "Squeeze". It's universal because it's unambiguous: people know exactly what to do without being taught.

The audio came together the same way. Breathing rhythm, exercise beat, voice timing — three layers that needed to sync across four exercise modes. I described the full session logic, and AI built it as one connected system rather than three separate pieces.
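One way to read "one connected system" is a single timeline from which every layer takes its timing, so voice and beat cannot drift apart. The sketch below illustrates that idea only; it is not the generated code. The `Cue`, `Phase`, and `buildTimeline` names, the phase durations, and the beat counts are all assumptions.

```typescript
type CueKind = "voice" | "beat";

interface Cue {
  atMs: number; // offset from session start, in milliseconds
  kind: CueKind;
  label: string;
}

interface Phase {
  label: string; // e.g. "Inhale", "Exhale", "Squeeze"
  durationMs: number;
}

// Build every voice and beat cue for one mode from the same clock.
function buildTimeline(phases: Phase[], beatsPerPhase: number, cycles: number): Cue[] {
  const cues: Cue[] = [];
  let t = 0;
  for (let c = 0; c < cycles; c++) {
    for (const phase of phases) {
      // The voice cue fires exactly when the phase starts.
      cues.push({ atMs: t, kind: "voice", label: phase.label });
      // Beats divide the phase evenly, so they always fit the breathing pace.
      const gap = phase.durationMs / beatsPerPhase;
      for (let b = 0; b < beatsPerPhase; b++) {
        cues.push({ atMs: t + b * gap, kind: "beat", label: `${phase.label} beat ${b + 1}` });
      }
      t += phase.durationMs;
    }
  }
  return cues;
}

// Illustrative timing for a breathing mode; the durations are assumptions.
const breathePhases: Phase[] = [
  { label: "Inhale", durationMs: 4000 },
  { label: "Exhale", durationMs: 6000 },
];
```

Each of the four modes would pass its own phases into the same function, and a player would then schedule audio from the `atMs` offsets. Changing a breathing pace moves the beats and voice cues with it, because nothing is timed independently.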

Deployment took minutes. One HTML file, Vercel, one command. Live.

What stood out most was the speed. From visual exploration to a locked direction to synced audio and voice, it all happened within days. That matters because fast execution lets you stay inside the energy of a new idea. You ship before the momentum dies.

Every call that gave Revive its character — cutting features, dropping the 3D direction — still needed a human making the judgment. AI made execution faster. It didn't make the decisions.

As the project grows, keeping AI aligned with your design direction becomes the real work. Communicating intent clearly, maintaining visual consistency across versions, preventing drift — these are real design skills now. And once a visual language is established, the challenge is making sure AI changes things correctly within it, not around it.

The web app is live. Currently collecting user feedback.

Once there's enough to act on, the next round of work begins — refined visuals, stronger features, onboarding, subscription, and an iOS release.

This is a product in progress. The best version of Revive will be shaped by the women who use it.

Breathe · Floor Slow · Floor Fast · Together — each targeting a different stage of recovery.

Web now. iOS next — onboarding, subscription, and App Store release planned after user feedback.

From first sentence to deployed product. No spec, no team, no prior research.